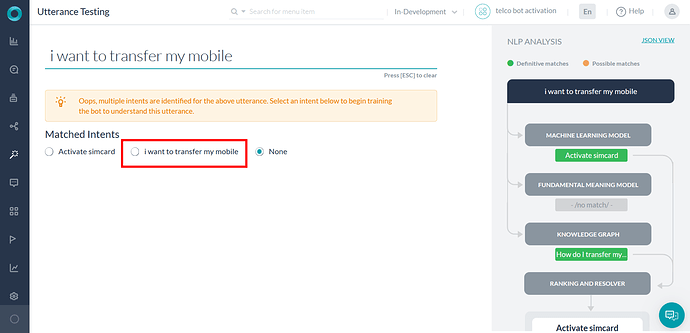

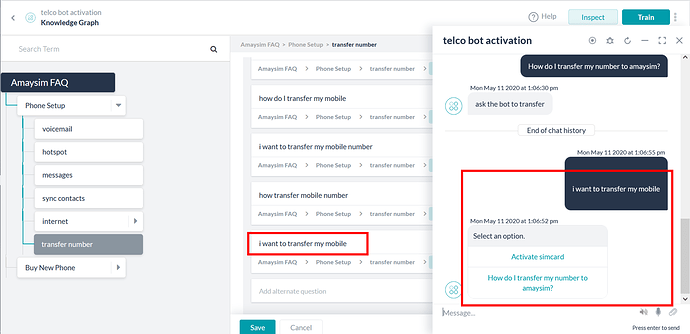

I’ve included the utterance "i want to transfer my mobile" as an alternative in my KG.

When I type this exact utterance into the bot, it doesn’t seem to recognise it at first, and gives me 2 options (the correct one, but also another that is not correct).

When I go into Utterance Testing, the NLP identifies multiple intents. When I mark the correct intent as the match and try the bot again with the exact wording ("i want to transfer my mobile"), the bot has not learnt the correct utterance (it still gives me 2 options, the correct one and the incorrect one).

Why doesn’t the bot respond correctly to the exact utterance match that I’ve configured? And why doesn’t the bot learn through testing when I tell it the correct match?

See config/output pics…

thanks!

Richard,

For that utterance there are two definite answers - the ML engine has identified a dialog that matches, and KG has identified a question. Because both engines are definite in their responses, Ranking and Resolver does not overrule either of them or pick a winner, so the bot offers both as options.

In this situation, using the Utterance Training scheme to identify a “correct” intent is not going to help because under the covers all that is doing is adding more training to an already definitely identified intent.
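To make that concrete, here is a toy sketch of the behaviour described above. It is not the platform's actual Ranking and Resolver code; the `Match` class, the `resolve` function, the intent names, and the scores are all hypothetical and only illustrate why extra training on an already definitive intent leaves the outcome unchanged.

```python
from dataclasses import dataclass


@dataclass
class Match:
    engine: str       # which engine produced the match, e.g. "ML" or "KG"
    intent: str       # hypothetical intent name
    score: float      # hypothetical confidence score
    definitive: bool  # True when the engine is certain about its match


def resolve(matches):
    """Return the intents the bot would offer to the user (toy model)."""
    definitive = [m for m in matches if m.definitive]
    if definitive:
        # Every definitive match is kept; their scores are not compared.
        return [m.intent for m in definitive]
    # Assumed fallback: with no definitive match, take the best-scoring one.
    best = max(matches, key=lambda m: m.score, default=None)
    return [best.intent] if best else []


# Before extra training: both engines are already definitive.
before = [Match("ML", "dialog_intent", 0.90, True),
          Match("KG", "kg_question", 1.00, True)]
# After extra training: one score goes up, but both are still definitive.
after = [Match("ML", "dialog_intent", 0.99, True),
         Match("KG", "kg_question", 1.00, True)]

print(resolve(before))  # ['dialog_intent', 'kg_question']
print(resolve(after))   # ['dialog_intent', 'kg_question']
```

In this model the only way to get a single answer is to stop one of the engines from returning a definitive match, which is why the fix lies in the training of the "incorrect" one.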

What you will have to do is go back through the training for the “incorrect” one and determine why that engine came up with a definitive answer. You can see details about the match by clicking on the gray boxes in the Utterance Testing screen.

For an ML match, it might be because there is an exactly matching training sentence, because the thresholds are too loose, or because the training is not well balanced, so that from ML’s perspective the words can only be interpreted one way.

Thanks again Andy!

I’ve found that it works for the utterance 100% now…but I haven’t changed anything in the bot setup. It seems that sometimes if I repeat the utterance over and over again, eventually the model gets it right (this has happened for a few different utterances).

There must be something happening in the background I can’t see…it’s a little frustrating but it gets there eventually!